"The amount of power to run compute by 2045 will be the base power of the planet right now. The drain on resources is so high, you need to put that compute in space and use the power of the sun...that's a really good use of space to help save the planet."

Tom Mueller, employee #1 at SpaceX

What if the future of computing was not on Earth… but over our heads?

The explosion of artificial intelligence is pushing our terrestrial data centers to the limit. Electrical power, water for cooling, physical space… everything is running short. And as we approach an energy bottleneck, a bold idea is starting to take hold: moving the cloud into space.

The spark for this exploration came from the article “AI & Quantum” by Peter H. Diamandis (Metatrend #2, Nov. 25), which lays out a clear vision: the infrastructure that supports AI today is not scalable without rethinking everything. So I went diving… and found that there are already companies launching servers into space. Literally.

Why upload data centers to space?

Uninterruptible solar power

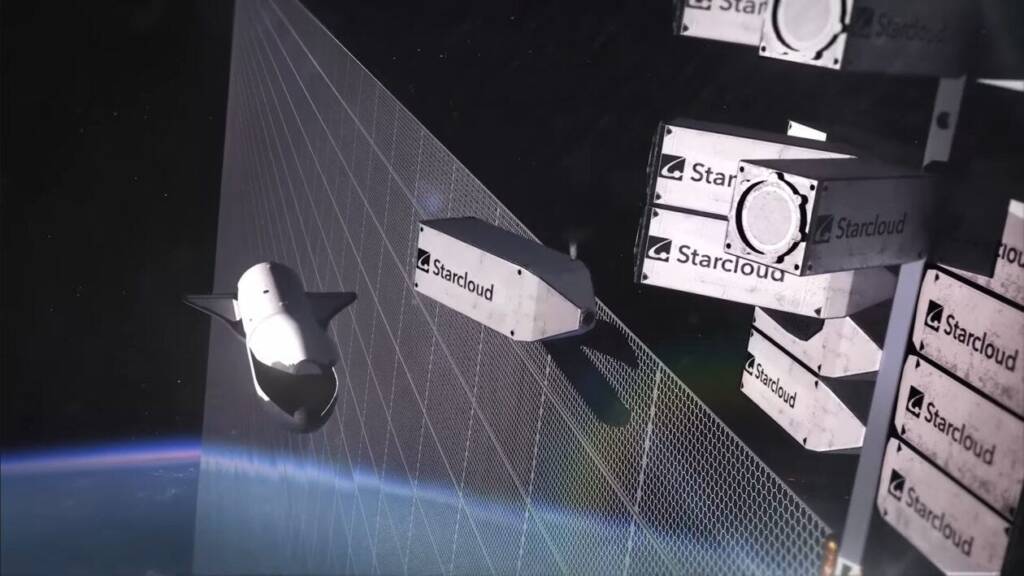

In the right orbit, there is no night and no clouds: a satellite in a dawn-dusk sun-synchronous orbit, for example, can capture sunlight almost 24/7. This enables data centers to operate on a near-constant power supply, without relying on the terrestrial power grid. According to the startup Starcloud, orbital electricity could be up to 10 times cheaper than ground-based electricity.
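How continuous the sunlight is depends on the orbit. As a rough illustration, the sketch below estimates the worst-case share of each orbit spent in Earth's shadow for a circular orbit. It assumes a simple cylindrical shadow and the Sun lying in the orbital plane. The function name and the 500 km altitude (the figure used at the end of this post) are illustrative choices, not Starcloud's published design.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def eclipse_fraction(altitude_km: float) -> float:
    """Worst-case fraction of a circular orbit spent in Earth's shadow.

    Assumptions (for illustration only): cylindrical shadow, Sun in the
    orbital plane, perfectly circular orbit.
    """
    ratio = EARTH_RADIUS_KM / (EARTH_RADIUS_KM + altitude_km)
    # Half-angle of the shadowed arc is asin(ratio); the full arc is twice
    # that, out of a full circle of 2*pi.
    return math.asin(ratio) / math.pi


frac = eclipse_fraction(500.0)
print(f"Worst-case eclipse fraction at 500 km: {frac:.1%}")
```

Under these assumptions, a 500 km orbit can spend a bit more than a third of each revolution in shadow, which is why near-continuous sunlight depends on choosing an orbit that stays along the day-night boundary.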

Waterless cooling

Data centers consume millions of liters of water per day for cooling. In orbit, heat is instead radiated directly into deep space through radiator panels, without the need for cooling towers or water resources. An elegant and sustainable solution to an increasingly urgent problem.

Expansion without physical limits

On Earth, you need land, permits, power lines, and environmental regulations. In space, growth means launching more modules. No annoying neighbors or urban planning restrictions.

Reduced ecological footprint

An orbital data center does not use water, nor does it emit CO₂ during its operation. Except for the initial launch, its environmental impact is very low. And as reusable rockets (like Starship) improve, launch costs are also falling.

So why don't we do it now?

Cooling is still complicated

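Rejecting heat in a vacuum is possible, but there is no air or water to carry it away: everything must leave as radiation, so dissipating heat on a large scale requires huge radiators and fine thermal management. One mistake and the chips overheat. There is no margin for failure up there.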
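To get a feel for how "huge" those radiators are, here is a back-of-the-envelope sketch based on the Stefan-Boltzmann law. The radiator temperature (300 K), emissivity (0.9), and 1 MW heat load are assumed values chosen for illustration, and the calculation ignores sunlight and Earth's infrared falling on the panel, so real designs would likely need more area.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(
    heat_w: float,
    radiator_temp_k: float = 300.0,  # assumed panel temperature
    emissivity: float = 0.9,         # assumed surface emissivity
    sink_temp_k: float = 3.0,        # approximate deep-space background
) -> float:
    """Ideal one-sided radiator area needed to reject heat_w watts.

    Idealized: no solar or Earth heat input, uniform panel temperature.
    """
    net_flux = emissivity * STEFAN_BOLTZMANN * (
        radiator_temp_k**4 - sink_temp_k**4
    )
    return heat_w / net_flux


area = radiator_area_m2(1e6)  # hypothetical 1 MW of waste heat
print(f"Radiator area for 1 MW: {area:,.0f} m^2")
```

With these assumptions, a single megawatt of waste heat needs on the order of 2,400 m² of one-sided radiator. Running the panels hotter shrinks the area quickly, since radiated power grows with the fourth power of temperature.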

Space radiation

Outside the atmosphere, the components are exposed to cosmic rays, solar particles, and micrometeoroids. They need shielding, redundancy, and robust design to survive years of operating alone.

Launch cost and life cycle

Launching kilograms into space is still expensive, even though prices have been declining every year. And you can’t “do maintenance”: if something fails, you have to replace the satellite. That’s why they are designed to last 3-5 years before being replaced by new versions.

Latency and bandwidth

Moving data to and from orbit takes time. For massive AI training runs this may be acceptable, but for real-time tasks (such as online games or trading), latency is still an obstacle.
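Two rough numbers help separate the physics from the plumbing here. The sketch below computes the best-case signal round trip to a satellite directly overhead and the time to move a large dataset over a fixed link. The 500 km altitude is the figure used at the end of this post. The 1 PB dataset and 10 Gbit/s link are hypothetical values, not figures from any of the companies mentioned.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def round_trip_ms(altitude_km: float) -> float:
    """Best-case round-trip light time to a satellite directly overhead."""
    one_way_s = altitude_km * 1000.0 / SPEED_OF_LIGHT_M_S
    return 2.0 * one_way_s * 1000.0


def transfer_days(dataset_bytes: float, link_bits_per_s: float) -> float:
    """Time to move a dataset over a link running at a constant rate."""
    seconds = dataset_bytes * 8.0 / link_bits_per_s
    return seconds / 86_400.0


print(f"Round trip at 500 km: {round_trip_ms(500.0):.2f} ms")
# Hypothetical workload: 1 PB over a sustained 10 Gbit/s link.
print(f"1 PB at 10 Gbit/s: {transfer_days(1e15, 10e9):.1f} days")
```

The light-time alone is only a few milliseconds in this best case. In practice, delay also comes from ground-station availability, routing, and queuing, and bulk uploads are bounded by link bandwidth, which is why batch workloads such as training fit better than interactive ones.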

What does this mean for AI?

Space data centers could free AI from terrestrial energy limits. For tasks such as training giant models, simulations, or big data analysis, this on-orbit solar computing may be just what we need.

In addition, a new category would be born: the “orbital green cloud”, an AI service with a smaller ecological footprint, greater resilience, and no need for local permits or infrastructure.

Startups like Starcloud, in partnership with Crusoe and with support from NVIDIA, are already testing satellites with next-generation GPUs. The vision is clear: in 10 years we could have a constellation of data centers floating around the planet, processing AI in a clean and scalable way.

Starcloud is a startup based in Redmond that has set out to take data centers into space to solve AI’s biggest bottleneck: power. Its first satellite, Starcloud-1, launched in 2025, is a module weighing about 60 kg with an NVIDIA H100 GPU on board. It is capable of offering up to 100 times more computing power than any previous space mission, and it runs solely on solar power with radiative cooling. The mission is still an “on-orbit laboratory” to validate that data center hardware can operate in extreme conditions, but the roadmap aims at multi-gigawatt data centers powered by giant solar arrays and a public cloud in space, in collaboration with Crusoe, which plans to offer commercial GPU capacity from orbit starting in 2027.

Moving our data centers up into space sounds radical, but it may be the most logical thing to do if we want to continue to scale artificial intelligence without depleting the planet’s resources.

Yes, many challenges remain: heat, radiation, costs, regulation. But if we can solve them, we could be looking at a new frontier of global digital infrastructure. And who knows… maybe in a few years, when you ask your AI assistant for something, you’ll be communicating with a server orbiting the Earth 500 km above, bathed in the eternal sun of space.

Will we see it soon? Everything points to yes.

Is it worth betting on, and do you see it as a possibility?

Leave me your comments. I’d love to read them.

Have a good week!

Sources:

Data Centers in Orbit – Metatrend #2: AI & Quantum (Peter H. Diamandis, Substack)

How Starcloud Is Bringing Data Centers to Outer Space (NVIDIA Blog)

Starcloud-1 satellite reaches space, with Nvidia H100 GPU now operating in orbit (DataCenterDynamics)

StarCloud Wants to Move the World’s Data Centers to Space (AIM Media House)

Crusoe to Become First Cloud Operator in Space Through Strategic Partnership with Starcloud (GlobeNewswire)

Crusoe to deploy in Starcloud satellite data center in late 2026, offer ‘limited GPU capacity’ in space from 2027 (DataCenterDynamics)

How Will Crusoe and Starcloud Build Data Centres in Space? (Data Centre Magazine)

Crusoe and Starcloud’s Solar-Powered Data Centers in Space (Energy Digital)

Crusoe to Become First Cloud Operator in Space Through Partnership with Starcloud (Techstrong IT)

NVIDIA’s H100 GPU Takes AI Processing to Space (IEEE Spectrum)

Jeff Bezos envisions space-based data centers in 10 to 20 years (Reuters)

Jeff Bezos: Why Space Could be the Future of AI Data Centers (AI Magazine)