Just a few days ago, something happened that I’ve been waiting for weeks. Jensen Huang, the CEO of NVIDIA, took the stage at GTC 2026 in San Jose in his usual leather jacket… and for almost three hours, he left us all open-mouthed.

I am not exaggerating.

What was announced at this event is not a simple product update. It’s one of those things that you look at and think, “Okay, the world just changed a little bit.” And I think a lot of people haven’t realized that yet.

Let’s break down everything that was announced. It’s dense, I warn you. But it’s worth it.

Vera Rubin: the beast that replaces Blackwell

Let’s start with the big stuff.

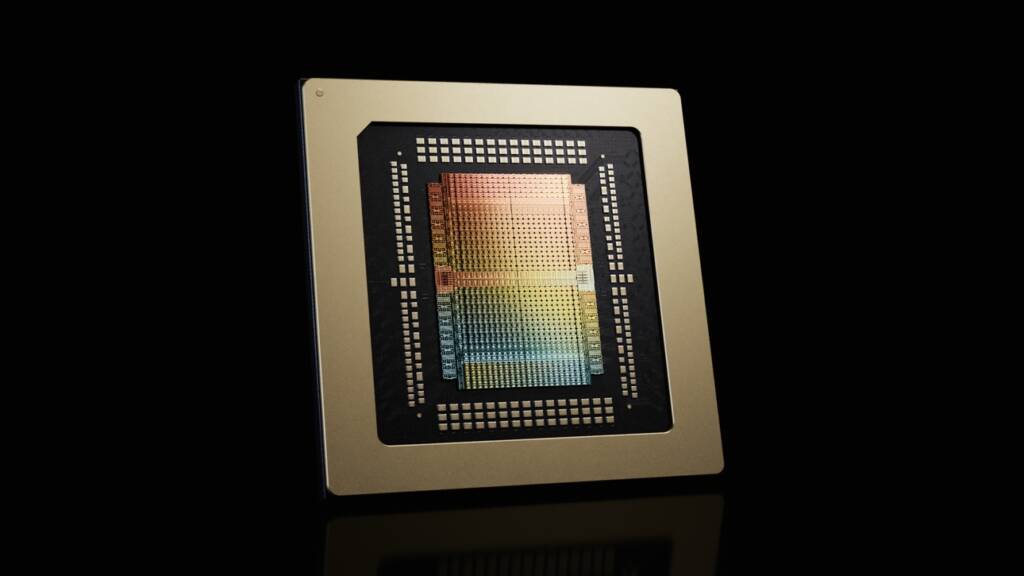

NVIDIA has officially unveiled the Vera Rubin architecture, the successor to Blackwell. And the numbers are… well, you be the judge.

336 billion transistors per GPU. Three hundred and thirty-six billion. Blackwell had 208 billion, so we’re talking about a 60% jump in a single generation. Manufactured on TSMC’s 3-nanometer process, full node of difference.

But what got me thinking was something else. The inference performance: 50 petaflops on NVFP4.

Five times more than Blackwell.

Let me repeat that because I think it’s not sized right: five times. In one generation. There are people who have been waiting years for 20-30% improvements, and here they plant you a 5x as if nothing.

Each GPU comes with 288 GB of HBM4 memory with a bandwidth of 22 terabytes per second. And the accompanying CPU, simply called Vera, has 88 ARM cores, and its only job is to keep the GPU cluster running at peak performance around the clock. No more, no less. A CPU at the service of GPUs.

The complete system, the Vera Rubin NVL72, packs 72 of these GPUs into a single rack. What NVIDIA says – and here it’s up to you to judge how much you believe it – is that this will reduce the cost per inference token by up to 10 times.

When does it arrive? Second half of 2026. AWS, Google Cloud, Azure and Oracle are already on the list.

Groq's acquisition: 20 billion for speed

And here comes one of the boldest moves I’ve seen in tech in a long time.

NVIDIA bought Groq in December 2025 for $20 billion. For those who don’t know, Groq has revolutionized inference with its LPU, an SRAM-based architecture capable of generating text at speeds that seem like science fiction. Speeds that made you wonder if your internet connection had mutated.

Well. Huang unveiled Groq 3, the first chip born of that acquisition. An LPX rack with 256 LPUs designed to sit alongside the Vera Rubin and handle real-time inference for AI agents.

The stat that nailed it for me: that rack can deliver 35x the token throughput per watt over Rubin GPUs alone. 35x. And the speed target is 1,500 tokens per second for agent-to-agent communications.

Basically, NVIDIA has split the AI world into two halves: training (Vera Rubin) and ultrafast inference (Groq). It’s like having one brain for thinking and one brain for talking. Each does what it does best.

The question I have to ask myself is if this doesn’t end up making NVIDIA too big. Too much of a must-have. But that’s what I talk about below.

One billion dollars. With B.

There is one piece of information that overhangs the entire keynote and changes everything.

Jensen Huang said, with that calmness of his that is sometimes a little scary, that he expects combined orders from Blackwell and Vera Rubin to reach $1 trillion by 2027. A trillion in English, so there’s no confusion.

One billion.

NVIDIA’s FY2026 data center revenue was already 193.5 billion. Which is already a figure that is hard to process. But a billion in orders is another galaxy altogether.

And that tells you something we sometimes forget: the demand for AI computing is not going down. It’s not moderating. It’s accelerating. Governments, big tech, startups, companies in every industry… everyone wants more GPUs. And NVIDIA is, at the moment, the only one that can deliver at that scale.

The ChatGPT moment of the autonomous car

Huang put it this way, literally: “The ChatGPT moment of autonomous driving has arrived.”

Big phrase. We’ll see.

The specifics: massive expansion of the DRIVE Hyperion 10 platform for Level 4 autonomous vehicles. The new partners are BYD, Hyundai, Nissan, and Geely, which, between them, sell a huge number of cars.

But what really caught my attention was the partnership with Uber. Fully autonomous robotaxi services in Los Angeles and San Francisco by the first half of 2027. And the plan is to expand to 28 cities worldwide by 2028.

Twenty-eight cities. In two years.

I don’t know if that’s exactly right or there’s some hype, because the promises of autonomous driving have been… optimistic, shall we say, for years. But if NVIDIA backs its infrastructure and Uber backs its distribution network, it looks more likely to work than previous attempts. At least on paper.

NemoClaw: the operating system for AI agents

Another announcement that went a bit unnoticed among so much hardware but I think it’s going to be huge.

First, the context. OpenClaw has become the fastest-growing open source project in history. It’s basically a framework for creating and running AI agents locally, on your own hardware. Without relying on anyone’s cloud. If you read my article on Moltbook, you know what this is all about and the vulnerabilities it entails.

NVIDIA has built NemoClaw on top of OpenClaw– an enterprise platform to run these agents securely on the new RTX PRO 6000 workstations, which have up to 4,000 TOPS of local AI compute and 96 GB of GPU memory.

In addition, Huang announced the Nemotron Coalition, an alliance with Perplexity, Mistral AI, LangChain and others to develop open frontier models. This is NVIDIA’s answer to the closed OpenAI ecosystem: open models, running locally, on NVIDIA hardware.

For me, this is the announcement that will have the most impact in the medium term. Being able to run powerful AI agents on your own machine without sending your data to any external server… that takes away the excuse many companies use today for not using AI because of privacy issues. Well, at least in theory. Implementation is another story as usual.

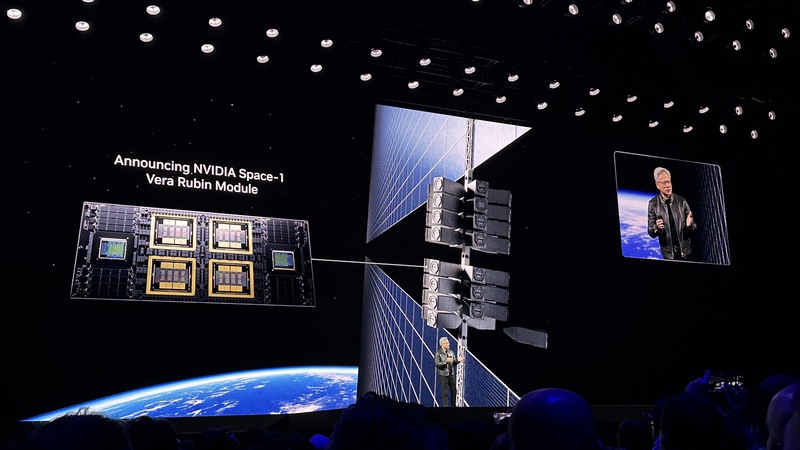

Data centers in space. Yes, in space.

And just when you thought you’d seen it all, Huang pulls this out.

NVIDIA introduced the Vera Rubin Space-1. A computing module specifically designed to operate in orbit. Orbital data centers. I read it and still struggle a bit.

The module combines two Rubin GPUs with a Vera CPU in an architecture that offers up to 25 times more AI computing than the H100, adapted for space conditions: radiation, extreme temperatures, vibration… Partners already working on this include Aetherflux, Axiom Space, Planet Labs, and Kepler Communications. The idea is to process data from satellites and sensors directly in orbit.

Sounds like science fiction, I know. But when NVIDIA announces something with partners and specific dates, it’s usually serious. When will we see data centers working in orbit? That’s another question. Honestly, it makes me a little giddy to think about it.

Recall that I discussed such on-orbit deployments in my post “On-orbit data centers: the ultimate cloud for the AI era?” In fact, the renders Nvidia used in the presentation at this point were from StarCloud.

DLSS 5: when AI paints the pixels

For those of us who are not only professionals but also gamers -there are many more of us than people think-, NVIDIA introduced DLSS 5.

It is not an upscaler like previous versions. It is a real-time neural rendering model that generates photorealistic lighting and materials from AI. AI is literally painting pixels with information about how light works in the real world. Digital Foundry, which doesn’t usually give away praise, said it’s the most amazing thing they’ve seen in a long time.

It will arrive in the fall of 2026.

And what about after Vera Rubin?

As it turns out, NVIDIA already has an answer. It’s called Kyber.

Huang showed a prototype of the next architecture: 144 GPUs organized in vertical trays – not horizontal as up to now – to increase density and reduce latency. It is called Vera Rubin Ultra and will arrive in 2027.

They are already working on the next generation of the one they just announced. That’s the speed at which this is moving. And the speed at which you have to move if you don’t want to be left out.

GTC 2026 will be remembered as one of the most important events in recent technology history. And it’s not because of one announcement, it’s because of everything together. NVIDIA is no longer a graphics card company. We already knew that. But it’s now building the infrastructure on which all the AI on the planet will run. Including the one that’s going to run outside of it, in orbit.

With a trillion in expected orders and the purchase of Groq for 20 billion, Huang is playing at a level few CEOs have reached. That much is undeniable.

Risks? There are, of course. That the world is so dependent on one company for all AI computing worries me. AMD, Intel, Google and Amazon’s own chips… competition exists but it’s behind. And the valuations are so high that any stumble is going to hurt.

But right now, if you ask me who sets the pace… I have no doubt.

Do you think NVIDIA can keep up this pace or is this a bubble that will burst sooner rather than later? I would love to read your opinions.

Have a good week!

Article sources:

- CNBC – “Nvidia GTC 2026: CEO Jensen Huang sees $1 trillion in orders for Blackwell and Vera Rubin through ’27” (Mar 16, 2026)

- Tom’s Hardware – “Nvidia GTC 2026 keynote live blog – Vera Rubin GPUs and CPUs, DLSS 5” (16 Mar 2026)

- TweakTown – “NVIDIA unveils Vera Rubin at GTC 2026” (16 Mar 2026)

- StorageReview – “NVIDIA GTC 2026: Rubin GPUs, Groq LPUs, Vera CPUs” (16 Mar 2026)

- Techzine – “Nvidia’s Groq 3 LPU targets agentic AI inference at GTC 2026” (16 Mar 2026)

- NVIDIA Newsroom – “NVIDIA Makes the World Robotaxi-Ready With Uber Partnership” (16 Mar 2026)

- Tom’s Hardware – “Nvidia announces Vera Rubin Space Module” (16 Mar 2026)

- NVIDIA Newsroom – “NVIDIA Launches Space Computing” (16 Mar 2026)

- TechCrunch – “NVIDIA’s DLSS 5 uses generative AI to boost photorealism” (Mar 16 2026)

- Tom’s Hardware – “Nvidia’s Nemotron coalition brings eight AI labs together” (16 Mar 2026)