This week Google finally launched its long-awaited Gemini AI Model, designed from the ground up with native Multi-modal capabilities.

The launch has not left anyone indifferent as it could represent a step forward in the development of Artificial Intelligence, allowing. Google (in theory), to take the lead, one step ahead of OpenAI, its main competitor.

I now understand the numerous launches made by OpenAI in previous weeks, trying to achieve a coup and a positioning as a leader in the AI market. With this launch Google seems to match expectations as little,

DeepMind: The mastermind behind Gemini

Gemini is a product of DeepMind, an artificial intelligence research company founded in 2010 by Demis Hassabis, Shane Legg, and Mustafa Suleyman. Since its inception, DeepMind has made significant contributions to AI, including the development of AlphaGo, the first chess program to defeat a world champion without human help, and AlphaFold, the first program to predict protein structures with human accuracy.

DeepMind was acquired by Google in 2014 and has continued to conduct pioneering research in AI ever since. Gemini is one of their latest achievements and represents an important step towards creating AI systems that can interact with humans more naturally and effectively.

But, let’s see what capabilities Gemini allows us, and the best way to do it is by watching the official video of its launching where its multi-modal capabilities are already shown, or “anything to anything” as Google puts it.

Comparison of Gemini with its LLM peers

Gemini’s multimodal nature distinguishes it from other LLMs, which typically excel in one or the other of text or voice processing. This unique ability allows Gemini to switch seamlessly between these modalities, enabling it to:

- Synthesize human-quality speech from text instructions: Gemini can read any text instruction and generate a corresponding audio file, making it ideal for creating interactive tutorials, podcasts, and other audio-visual content.

- Translate languages in real-time: Gemini can translate between languages while maintaining a smooth and natural conversational flow, enabling seamless communication between languages.

- Answer complex questions interactively: Gemini can participate in open conversations, providing answers to questions and asking additional questions to clarify user queries.

Capabilities that expand human-computer interaction

Gemini’s capabilities theoretically go beyond text and speech processing. You can also:

- Generate creative text formats: You can produce various creative text formats, including poems, code, scripts, musical pieces, e-mails, and letters.

- Compose different types of creative content: You can generate different types of creative content, such as blogs, articles, marketing materials, and other forms of written content.

- Answering open-ended, challenging or strange questions: You can handle open-ended, challenging, or strange questions, even those that require several steps to answer.

But is this demo real? Does it go beyond buying the rumor and selling the news?

This is a big question, as we may be looking at a video that does not exactly match the actual capabilities of the Model. In fact, if we go deeper into how this demo was prepared we will see that it does not seem that this “conversation” with the model is real. DotCSV has explained it perfectly in the second video it recently released.

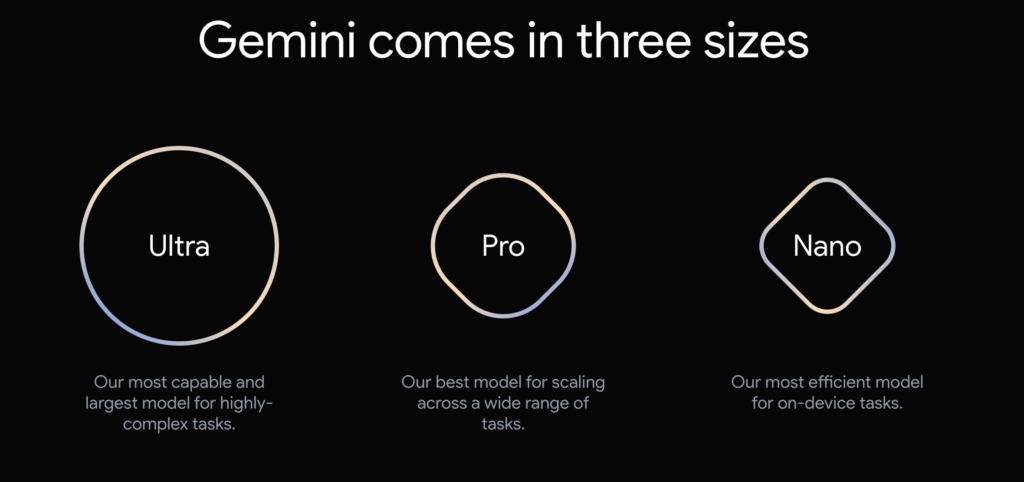

Product versions

Ultra: The ultimate power for demanding tasks

Gemini Ultra is the most powerful version of Gemini, designed to handle large-scale and complex natural language processing tasks. With a capacity of 176 billion parameters, it is capable of addressing text-to-speech and speech-to-text problems in an exceptional manner, as well as generating high-quality creative content. Gemini Ultra is ideal for applications that require maximum performance and an unprecedented level of precision.

Pro: Versatility for a wide range of scenarios

Gemini Pro focuses on delivering a balanced combination of performance and efficiency, making it a versatile choice for a variety of applications. With a capacity of 60 trillion parameters, Gemini Pro delivers solid performance on a wide range of tasks, from translating languages to answering questions interactively. Gemini Pro is ideal for applications that require a balance between performance and efficiency, such as virtual assistance systems and advanced chatbots.

Nano: Compact solutions for limited devices and applications

Gemini Nano is specifically designed for resource-constrained devices and applications, such as smartphones and handheld devices. With a capacity of 0.6 billion parameters, Gemini Nano offers an efficient and compact natural language processing experience without sacrificing quality and performance. Gemini Nano is ideal for applications that require a small model size and reduced power consumption, such as embedded voice assistants and pocket translation applications.

A future focused on voice interfaces

The future of Gemini and multi-modal LLMs lies in their ability to create natural and intuitive voice interfaces.

This is one of the topics I am most passionate about since moving from keyboard-based interactions to more fluid voice-based interactions can be a qualitative leap in human-machine interaction.

As voice-based interactions become increasingly prevalent, the ability of these systems to seamlessly switch between text and speech will be invaluable in developing conversational artificial intelligence systems that can understand and respond to user requests in a human-like manner. The real Virtual Assistants (not the Siri on duty).

Extracting insights from scientific literature

This is one of the videos that fascinated me from this presentation. It shows how Gemini in a few hours processed 200,000 scientific papers, extracted the relevant data from them updated a table created manually by scientists over the years, and even updated a graph with the newly identified data. You can see this capability in the following video.

In scientific research (as in other scientific fields), AI is being a brutal accelerator of processes that will surely result in very rapid benefits for human beings that we will all be able to enjoy in the coming years.

Generation of different output formats depending on context

A priori, Gemini’s ability to create interfaces based on the answers it provides to user questions is one of its most unique and powerful features. This capability allows Gemini to not only provide informative and useful answers to user queries but also to dynamically generate interfaces tailored to specific user needs and preferences.

This is one of the features that surprised me the most in the first product launch videos where it was clear how Gemini’s response was different depending on the question asked. For example, the system generated image galleries in response to a question related to cooking recipes but switched you to another step-by-step interface when you asked for cooking instructions… If this functionality is true, we will have a major breakthrough ahead of us.

Here are some examples of how Gemini can theoretically use this capability:

- Create interactive tutorials: Gemini can generate step-by-step instructions for users to follow, along with interactive elements such as buttons and sliders, to make the learning process more engaging.

- Personalize news feeds: Gemini can analyze users’ preferences and interests to create a personalized news feed that highlights the articles that are most relevant to them.

- Custom dashboard design: Gemini can help users create custom dashboards that provide real-time data and insights about their business, projects, or personal goals.

- Generate interactive maps: Gemini can create interactive maps that allow users to explore data, routes, or geographic information in a visually appealing and informative way.

By leveraging its multi-modal capabilities and understanding of user needs, Gemini can seamlessly integrate text, graphics, and other interactive elements to create intuitive and engaging interfaces that enhance the user experience. This ability to create interfaces based on the answers it provides makes Gemini a valuable tool for a wide range of applications, from education and e-commerce to customer relationship management and data visualization.

However, I must say that for the moment, everything we have seen of the Model has been in videos and is not yet endorsed on the Bard model that we currently use. As its functionalities are deployed and we are able to test them, we will be able to endorse the capabilities of the new model.

If you want to try out some of these new capabilities in Bard, you can do so as long as you perform the prompts in English. This is something I don’t understand either, as the model should have been released in multiple languages….

I hope you found this information interesting.

Have a good week!

References

- Google Blog

- Google has quietly pushed back the launch of next-gen AI model Gemini until next year, report says (Business Insider)

- End of ChatGPT dominance? Google’s Gemini to launch this fall with significant upgrades

- Here’s what we know so far about Google’s Gemini

- Welcome to the Gemini era (DeepMind)

- Google Gemini is here, first impressions (DotCSV)

- Google Gemini: what is it, how does it work, differences with GPT and when can you use this artificial intelligence model?

- Google launches Gemini… and fails to impress again (Enrique Dans)

- What is Gemini, Google’s most advanced AI model? (Wired)

- [UPDATE] DECEPTION with the GOOGLE GEMINI DEMO (DotCSV)